Welcome to the Quantum Universe

An oscilloscope trace from the Bevatron, a massive particle accelerator at the Lawrence Berkeley National Laboratory.

Suppose that the everyday world we experience through our senses is but a tiny, impoverished fragment of a stupendously greater realm, one that is incomparably rich, exuberantly dynamic and bewilderingly alien. Envisage a domain of boundless possibilities, of subtle convolutions of form and substance, distributed all around us and inside us, flowing out into infinite dimensions beyond our ken and even beyond the reach of our imagination. That dazzling, gargantuan domain is in fact the world in which we are already inescapably embedded, but from which we are almost totally shut out. Access to this alien world is attained only through infinitesimal portals, observational pinholes that afford but momentary glimpses of a seething wonderland of restless activity, vaster than all the universe we see, vaster even than all possible universes we can comprehend, vaster indeed than all conceivable vastness. Welcome to the quantum universe.

And immediately we hit a foundational question: Is this magical metaverse merely an abstraction, a mathematical frolic of interest only to physicists and philosophers, or does it in some sense really exist? That question—what is real?—goes right to the heart of the quantum story, and indeed of the entire scientific enterprise.

To ease ourselves into this weighty topic, let’s start with a brief anecdote. When I was 15, my sister’s boyfriend saw a ghost. At least, that’s what he claimed, having spent the night sleeping downstairs in our living room, which we children long believed was haunted. My mother was skeptical, however. “He was probably just dreaming,” was her opinion, “or drunk.” I preferred the ghost story. Who was right? Was there “really” a ghost in our living room or was the whole affair just a figment of the young man’s imagination?

Each of us experiences a world “out there,” which we view through our eyes and interpret via the information-processing taking place in our brains. Reality, for any one of us, is a product of the external and internal, of matter and mind. To escape this subjectivity, a long list of philosophers, from Aristotle and René Descartes to John Locke and Thomas Nagel, defended the notion of objective reality—a physical universe that exists independently of our individual observations, and which has done so since long before human observers appeared on the scene. It is a world made of material objects that move and change in response to various forces. That, at least, is the normal view of existence adopted by Western thought. And yet, as I shall explain, quantum mechanics confounds this simplistic version of an objective, independent reality. There is indeed a world “out there,” but it is far stranger than most people imagine, or, in fact, can imagine.

Reality is a slippery concept, about which entire volumes have been penned by eminent thinkers over the centuries. Most of us, however, make do with a rough-and-ready notion that goes something like this: If an entity is real, then its existence could, in principle, be confirmed by someone else. It should be “independently verifiable by a disinterested investigator,” to put it formally. It’s a sentiment well encapsulated in the motto of Britain’s national scientific academy, the Royal Society: Nullius in verba—Take nobody’s word for it. For the two and a half centuries after the Society’s founding in 1660, that pragmatic assumption accorded with the common-sense view that there are definite facts about the world, whether or not anybody is checking. Thus, science took as its basis objective truth, as opposed to personal subjective experience that might include dreaming, hallucination, hypnotic suggestion, mirages and, well, ghosts. It therefore came as a bombshell to scientists when, early in the twentieth century, a new theory emerged, which shattered this comforting belief by implying that the external world lacks definite objective existence when it isn’t being watched.

On January 27, 1926, the world changed forever. It was on that day that the German scientific journal Annalen der Physik received a paper by the Austrian physicist Erwin Schrödinger that demolished centuries of belief about the nature of matter and the way the physical world is put together. It had been apparent for a quarter of a century that something was very wrong with the traditional concept of material objects on the atomic and molecular scale, but it took the publication of a specific equation—Schrödinger’s equation—to sweep away the old picture of matter and open the path to an entirely new way of thinking about the physical universe. What emerged, following a few years of frenzied analysis by the world’s leading physicists, was a new scientific discipline called quantum mechanics. It transformed our understanding of reality, reshaped the landscape of science, and gave birth to entire new industries that have powered economic growth for decades.

Quantum mechanics didn’t spring ready-made into Schrödinger’s mind. His equation was an attempt to grapple with a plethora of oddities and paradoxes that pervaded the world of pre-quantum—now known as classical—physics. These oddities hinted that something was seriously amiss with scientists’ understanding of matter and forces: impossible stars, atoms that shouldn’t exist, heat radiation that ought to incinerate the universe—a puzzling array that nobody understood until it all fell into place in the mid-1920s.

Schrödinger was not alone in his endeavors. At that time, others were grappling with the baffling internal structure of atoms—most notably Werner Heisenberg, who was taking a different mathematical approach from Schrödinger, yet one that eventually turned out to be equivalent.

*

The genesis of the basic quantum concept was in 1900. It was just another frustrating day at work for Max Planck, the German physicist who took the first decisive leap. Planck had for years struggled to understand the problem of runaway energy at high frequencies in heat radiation. He had long been wrestling with the equations for thermodynamics and electromagnetism in an attempt to combine them in a way that matched the measured spectrum of heat radiation, which rises to a peak and then falls in a distinctive manner. In the end, the solution to the conundrum was actually disarmingly simple, banal even, and for Planck totally gratuitous. He tried out what would happen if radiant heat energy couldn’t be emitted in any amount, but only in discrete little packets, which he called quanta, from the same Latin root as “quantity,” meaning an amount or portion. This is the origin of the term. (A quantum of heat—and light—radiation is usually called a photon. In popular parlance, a quantum leap often means a big change, whereas most photons are extremely small units of energy.)

Each quantum, Planck proposed, possessed an energy in proportion to the frequency of the wave: higher frequency, more energy. That required Planck to introduce a new fundamental constant of nature into physics, one that fixes the scale, giving us the actual value of a quantum of energy for a specified frequency. It is, unsurprisingly, referred to as Planck’s constant. This was a drastic step, and Planck implemented it reluctantly, in the manner of a mathematical fudge—a computational maneuver—rather than as a serious hypothesis about nature. But it worked. If radiant heat behaves like a gas made up of particles rather than continuous waves, sharing out the energy democratically is straightforward.

Here is a rough explanation of how Planck’s proposal solves the problem. Since the energy going into an electromagnetic wave is not, according to Planck, infinitely divisible, the minimum nonzero amount a wave of a given frequency can possess is one quantum. However, a wave of that frequency might possess no energy at all, i.e. no quantum. The democracy principle is just a statistical distribution, so it’s okay if some wave frequencies don’t have any energy, while others have one, two, three quanta of energy, and so on, so long as the average comes out right. Obviously, the higher the frequency (requiring more energy per quantum, according to Planck’s hypothesis), the smaller the fraction of waves that, on average, can receive one quantum, with even fewer receiving two, and so on. The upshot is that the high-frequency waves are suppressed in energy. It’s a bit like a lottery in which just one person gets the first prize of $100, 10 people get the second prize of $10 each and 100 people get the third prize of $1. That is equipartition of sorts: Each category of prize winners receives the same $100 in total. A simple calculation shows that, with Planck’s proposal, the energy spectrum of heat radiation at a given temperature matches the experimentally measured shape. Voilà! Problem solved, just like that—at the stroke of a pen—a bit of elementary algebra.

But of course, the problem was far from solved. Planck was right, and the spectrum now bears his name in recognition, but in science it’s not enough to say, “I’ve been at this for years and I’m sick of the fact that the equations won’t give the right answer, so I’ll change one of them.” What that amounts to is altering a basic law of nature, a drastic step that you have to justify. Planck himself was less than ecstatic: He described his quantum hypothesis as “an act of despair.” In fact, he never came to fully accept quantum mechanics, the theory he helped incubate.
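The suppression of high-frequency waves can be checked with a few lines of arithmetic. The sketch below is a minimal illustration, assuming the standard textbook formula for the average energy of one wave whose energy comes only in whole quanta of size h·f, namely h·f / (e^(h·f/kT) − 1). The article does not state this formula, and the function names are hypothetical. Classical equipartition, by contrast, hands every wave the same average energy kT whatever its frequency.

```python
import math

# Exact SI values of the constants
H = 6.62607015e-34   # Planck's constant, J*s
K_B = 1.380649e-23   # Boltzmann's constant, J/K


def classical_mean_energy(temperature):
    """Classical equipartition: each wave gets k_B*T on average, whatever its frequency."""
    return K_B * temperature


def planck_mean_energy(frequency, temperature):
    """Average energy of a wave whose energy comes only in whole quanta of h*f.

    Weighting 0, 1, 2, ... quanta by exp(-n*h*f / (k_B*T)) and summing the
    geometric series gives h*f / (exp(h*f / (k_B*T)) - 1).
    """
    quantum = H * frequency
    return quantum / math.expm1(quantum / (K_B * temperature))


def suppression(frequency, temperature):
    """Planck's average energy divided by the classical one (1.0 means no suppression)."""
    return planck_mean_energy(frequency, temperature) / classical_mean_energy(temperature)


if __name__ == "__main__":
    T = 300.0  # room temperature, kelvin
    for f in (1e11, 1e12, 1e13, 1e14, 1e15):
        print(f"{f:.0e} Hz: {suppression(f, T):.3g}")
```

At room temperature the ratio is very close to 1 at 10^11 Hz, falls to roughly 0.4 at 10^13 Hz, and becomes vanishingly small at 10^15 Hz. High-frequency quanta are simply too expensive to hand out, much as the big lottery prizes go to very few winners.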

The next act of the drama came when Einstein offered a neat explanation for the photoelectric effect. That was 1905, Einstein’s annus mirabilis, in which he laid the foundations for not merely one revolution in physics, but three. Building on Planck’s suggestion that heat radiation is emitted in discrete quanta, Einstein proposed that light itself comes in discrete packets, with energy proportional to their frequency. (Radiant heat and light are both forms of electromagnetic radiation, differing only in frequency.) When a stream of these light quanta—photons—hits a metal surface, electrons are kicked out, one electron per photon. If, as hypothesized by Planck and Einstein, higher-frequency photons have more energy than lower-frequency ones, then they will produce faster-moving electrons, but not more electrons. If you turn up the intensity of the light (use more photons), then you do get more electrons liberated—a stronger electric current—as observed in experiments. It was actually this work, rather than his famous theory of relativity, that later earned Einstein the Nobel Prize in Physics. Yet—as we shall see—Einstein, like Planck, never came to fully accept quantum mechanics.
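Einstein’s account boils down to a simple energy budget. Each photon carries energy h·f; part of it (the metal’s work function, W) is spent freeing an electron, and the remainder becomes the electron’s kinetic energy. The sketch below assumes that relation, K_max = h·f − W, together with an idealized yield of exactly one electron per photon. The 2.3 eV work function in the usage example is an illustrative value for a hypothetical metal, and the function names are hypothetical too.

```python
H_EV = 4.135667696e-15  # Planck's constant in eV*s


def max_kinetic_energy_ev(frequency, work_function_ev):
    """Einstein's relation K_max = h*f - W; returns None if one photon cannot free an electron."""
    surplus = H_EV * frequency - work_function_ev
    return surplus if surplus > 0 else None


def electrons_ejected(photon_count, frequency, work_function_ev):
    """Idealized yield: exactly one electron per photon, but only above the threshold frequency."""
    if max_kinetic_energy_ev(frequency, work_function_ev) is None:
        return 0
    return photon_count


if __name__ == "__main__":
    W = 2.3  # illustrative work function in eV (hypothetical metal)
    for f in (4e14, 7.5e14, 1e15):   # red, violet, ultraviolet light, in Hz
        for n in (1000, 2000):       # number of photons: the light's intensity
            print(f"f={f:.1e} Hz, photons={n}: "
                  f"electrons={electrons_ejected(n, f, W)}, "
                  f"K_max={max_kinetic_energy_ev(f, W)}")
```

Raising the frequency raises the energy of each ejected electron without changing how many come out, while doubling the photon count doubles the number of electrons without changing their energy. Below the threshold frequency, no electrons emerge at all in this simple model, however intense the light.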

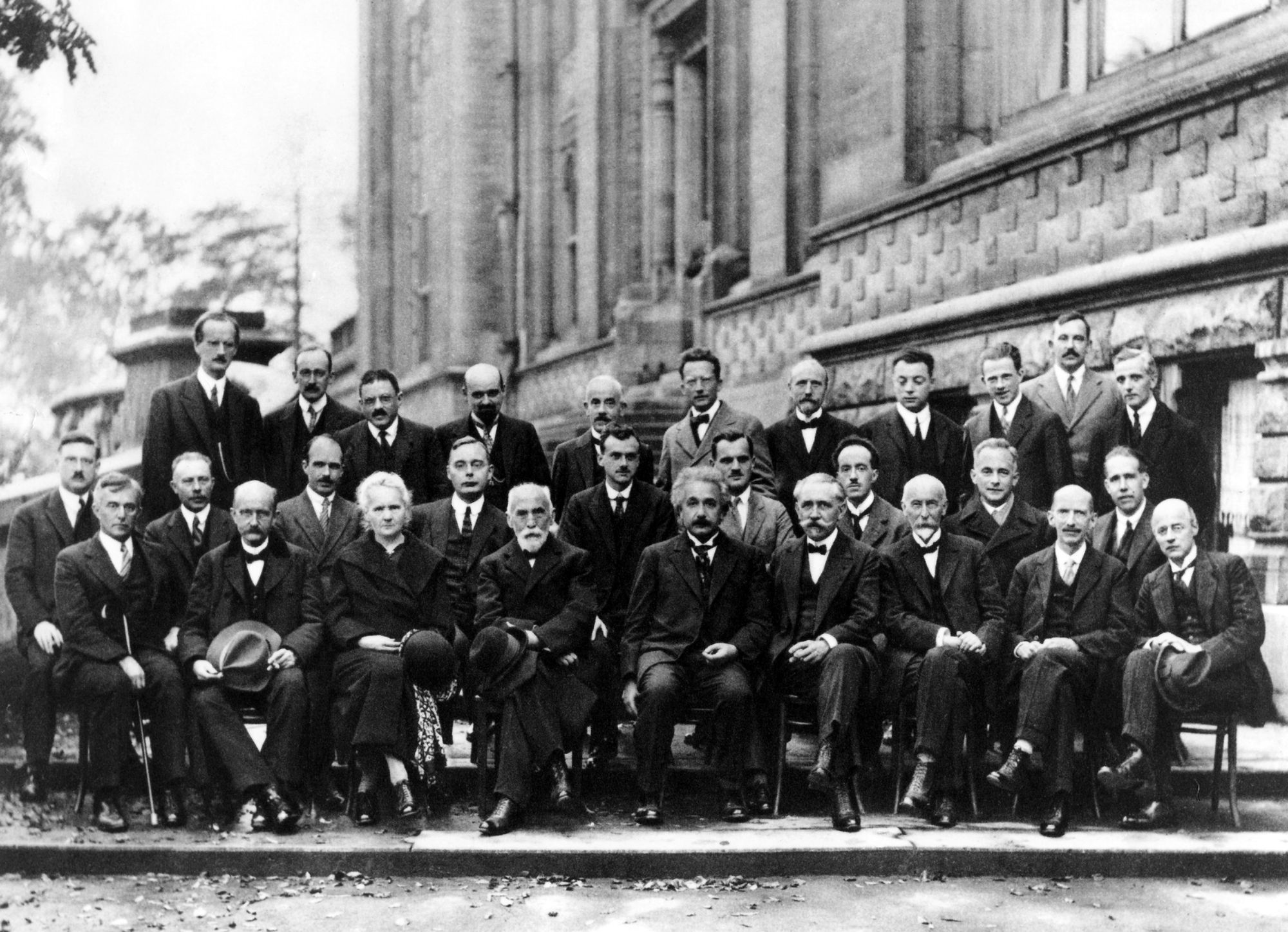

Scientists gathered at the Solvay Conference in 1927—including Einstein, Planck, Schrödinger, and Heisenberg.

Photo: Benjamin Couprie

The final piece of the jigsaw came in 1913. By this time, the “quantum” idea of energy coming in discrete packets was out there, although it was by no means generally accepted, given it was still completely unexplained. The Danish physicist Niels Bohr borrowed the basic concept and proposed that the orbits of electrons in atoms are also “quantized,” that is, they are confined to certain discrete radii and energy levels as they go around the nucleus. Bohr’s suggestion was not totally ad hoc. If one accepts with Planck and Einstein that light comes in little discrete packets, then the source that emits or absorbs the packets (e.g. atoms) must presumably also have discrete energy levels. In this picture, an electron may jump from one level to another, corresponding to a photon being emitted or absorbed. This early skirmish with atomic structure was a primitive model that made no attempt to account for why quantized energy levels exist, but Bohr proposed a formula for the energies of the levels that strikingly conformed with some known experimental results about the light spectra of hydrogen and helium. Bohr suggested that atoms would not collapse because there was a definite smallest orbit with the lowest energy—called the ground state—below which the electron couldn’t go, and any further radiation emission was forbidden—for what at the time were unknown reasons.
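Bohr’s formula for hydrogen is usually written E_n = −13.6 eV / n², where n = 1 is the ground state. The article does not quote it, so treat the sketch below as an illustration under that assumption. It computes the levels and the wavelength of the photon emitted when the electron jumps from n = 3 down to n = 2, which comes out close to the red line seen in hydrogen’s spectrum (about 656 nm). The constants are standard values; the function names are hypothetical.

```python
RYDBERG_ENERGY_EV = 13.605693122994  # hydrogen's ground-state binding energy in Bohr's model, eV
HC_EV_NM = 1239.84198                # Planck's constant times the speed of light, eV*nm


def bohr_level_ev(n):
    """Energy of level n: E_n = -13.6 eV / n**2 (n = 1 is the ground state)."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return -RYDBERG_ENERGY_EV / n**2


def emitted_wavelength_nm(n_upper, n_lower):
    """Wavelength of the photon emitted when the electron jumps from n_upper down to n_lower."""
    if n_upper <= n_lower:
        raise ValueError("the electron must jump down to a lower level")
    photon_energy = bohr_level_ev(n_upper) - bohr_level_ev(n_lower)
    return HC_EV_NM / photon_energy


if __name__ == "__main__":
    for n in range(1, 5):
        print(f"E_{n} = {bohr_level_ev(n):.3f} eV")
    print(f"3 -> 2 jump: {emitted_wavelength_nm(3, 2):.1f} nm")
```

Nothing lies below n = 1 in this formula, which is Bohr’s ground state: the electron has no lower level to fall into.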

Taken together, the proposals of Planck, Einstein and Bohr set the scene for what would eventually become a fully worked-out theory of quantum mechanics. But their various proposals left a lot to be desired. The fact that light is “quantized,” that it comes in discrete lumps of energy, flew in the face of overwhelming evidence that light is a wave. This caused great confusion, for how could something be both a wave and a particle? Later, physicists would refer to “wave-particle” duality, but for two decades nobody could really wrap their heads around the idea. Nor could the existence of quantized energy levels in atoms, and in particular the existence of a lowest level, or ground state, be reconciled with any known principles.

Then came a new development. In 1924, a French aristocrat, the splendidly named Louis Victor Pierre Raymond, 7th Duc de Broglie, proposed in his PhD thesis that electrons could sometimes behave like waves. It was a bold concept, given that electrons are quite obviously little particles. According to Planck and Einstein, light waves can sometimes behave like particles, and conversely, according to de Broglie, particles of matter can also behave like waves.

De Broglie’s matter-wave hypothesis was a huge leap, but he was spot on. It took a few years for his proposal to be definitively confirmed experimentally, but in the meantime, building upon de Broglie’s basic idea, Schrödinger presented his famous equation to describe how the mysterious matter waves propagate. He gave the strength (known as the amplitude) of the wave the Greek symbol psi, written ψ, in his original paper and the convention stuck. De Broglie had proposed that the wavelength—the distance between successive peaks of the wave—depends on the particle’s momentum: the greater the momentum, the shorter the wavelength. For example, fast-moving electrons should have shorter wavelengths—be more bunched up—than slow-moving, less energetic electrons. Experiments confirmed that prediction. This was the decisive breakthrough, following which everything fell into place.
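De Broglie’s relation is λ = h / p, where p is the particle’s momentum. The sketch below is only an illustration; it assumes speeds well below the speed of light, so that p = m·v, and the function name is hypothetical.

```python
H = 6.62607015e-34                 # Planck's constant, J*s
ELECTRON_MASS = 9.1093837015e-31   # kg (CODATA 2018)


def de_broglie_wavelength(mass, speed):
    """Wavelength lambda = h / p in metres, with the non-relativistic momentum p = m*v."""
    if mass <= 0 or speed <= 0:
        raise ValueError("mass and speed must be positive")
    return H / (mass * speed)


if __name__ == "__main__":
    for v in (1e6, 2e6, 4e6):  # electron speeds in m/s, well below the speed of light
        print(f"{v:.0e} m/s: {de_broglie_wavelength(ELECTRON_MASS, v) * 1e9:.3f} nm")
```

Doubling the electron’s speed halves its wavelength, which is the bunching-up that distinguishes fast electrons from slow ones.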

Electron waves readily explained the mystery of atomic energy levels. Quivering wave patterns envelop the nucleus, vibrating at distinct frequencies like the notes of musical instruments, each pattern corresponding to a specific energy level. The numbers match up brilliantly, recovering Bohr’s ad hoc formula and more. The patterns have different shapes and sizes; the simplest pattern is a compact symmetric cloud that hugs the nucleus most closely; that is the ground state, below which no other pattern is available. Schrödinger’s equation could also be used to describe unbound electrons colliding with atoms, scattering off them or being captured, or moving through crystals, or bouncing away from each other. In addition, it described other particles, including entire atoms and molecules, accurately predicting their vibrational and rotational motions and the forces that bind them. Applications of Schrödinger’s equation came so thick and fast it was said that even a second-rate physicist could do first-rate work. The stage was set for what was to become the Golden Age of physics.

Mingled with the heady excitement, however, was a deep sense of unease, not to say outright bewilderment, concerning the sweeping implications for the nature of reality. Schrödinger’s equation requires us to abandon the simplistic assumption that the atomic world is merely a scaled-down version of the everyday world and to accept that it is something else entirely, something defying common sense.

Quantum mechanics would become the dominant scientific story of the twentieth century: a sensationally successful branch of physics with applications across all the sciences and engineering, but supported by a detailed theory that seemed to strike at the very root of rational inquiry about the physical world. It was the epitome of the “paradigm shift,” according to which true revolutions do not merely change a few technical details—they reconfigure the entire conceptual foundations of the subject. The reason quantum mechanics was so hard to grasp, and remains controversial to this day, is that it demands that we part with long-cherished notions about the way the physical universe is constructed and our place within it. ♦

Adapted from Quantum 2.0: The Weird Physics Driving a New Revolution in Technology. Copyright (c) 2025 by Paul Davies. Used with permission of the publisher, The University of Chicago Press. All rights reserved.
